Two case studies in how familiar local utilities can become security primitives inside automated processing pipelines: remote resource loading in macOS textutil, and crafted KDBX-supplied KDF cost in KeePassXC.

Trusted tools are often treated as passive infrastructure.

They sit inside scripts, CI jobs, backend workers, importers, indexing systems, migration utilities, and security workflows. Because they are mature, local, and familiar, engineers often assume they are safe building blocks: deterministic, offline, bounded, and cheap to run.

That assumption is dangerous.

A tool can be mature and still have side effects. It can be working as designed and still become security-relevant. It can be safe in an interactive desktop workflow and risky when placed inside an automated pipeline that processes attacker-controlled input.

This article is not about exotic 0days. It is about a quieter class of security problems: correct behavior crossing the wrong trust boundary.

Offline-looking tools are not always offline-safe.

We will look at two examples:

- macOS textutil, a trusted local conversion utility that may fetch remote resources when converting HTML.

- KeePassXC, a password manager where database-controlled KDF parameters can dramatically increase computation cost.

The behaviors are different. The lesson is the same:

When untrusted input enters automation, every parser becomes an attack surface and every feature becomes a possible primitive.

Introduction: The Bug Is Not Always in the Tool

Security research often focuses on binary outcomes: memory corruption, authentication bypass, parser crashes, or code execution. That focus is valid, but incomplete.

Many real incidents begin elsewhere: with assumptions.

Teams trust local tooling because it is familiar. They trust workflows because they are internal. They trust conversion and processing stages because they look mechanical. But trust boundaries are not defined by where a binary is installed. They are defined by who controls input and what side effects that input can trigger.

The security issue is not always a broken binary. Sometimes the issue is a correct feature operating in the wrong context.

Test Environment Snapshot

The research was performed in a controlled local environment using differential test inputs and repeatable command-line workflows.

- Host OS: macOS 26.3 (Build 25D125)

- textutil path: /usr/bin/textutil

- textutil context: Apple system binary shipped with the host OS

- KeePassXC CLI: local 2.8.0-snapshot source build

- Test style: controlled local listener, differential control/test inputs, repeated timing runs

Important note: the KeePassXC behavior should be verified on an official release build before framing it as a vendor vulnerability candidate. In this article, it is treated as a research case study around cost boundaries and attacker-controlled metadata, not as a confirmed vendor advisory.

The Pattern: Correct Feature, Wrong Trust Boundary

Both case studies follow the same pattern:

- A behavior is functionally valid in isolation.

- Attacker-controlled input can influence that behavior.

- The behavior executes inside a trusted automation context.

- Security impact appears at the integration level.

This is why “working as intended” and “security-relevant” are not mutually exclusive.

The vulnerability is often not the tool. The vulnerability is the assumption around the tool.

Case Study I: textutil and Network-Active Text Conversion

Why textutil?

/usr/bin/textutil is commonly used to normalize or convert content in local scripts and backend processing workflows. It is a trusted local utility and is often treated as offline-safe by default.

That assumption deserves testing.

HTML is not just text. It can reference remote resources such as images, stylesheets, fonts, and imports. If a conversion tool resolves those references during processing, then conversion is no longer purely local. It becomes network-capable behavior.

In a desktop context, that may be acceptable. In a backend worker that processes untrusted input, it changes the threat model.
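To make the point concrete, the sketch below lists the remote-bearing references an HTML input carries before it reaches a converter. It uses only Python's standard-library `html.parser`; the tag/attribute table, the CSS regex, and the helper names are our own assumptions and deliberately incomplete (they ignore `<base href>`, SVG, and relative URLs), so treat it as an audit aid rather than a sanitizer.

```python
import re
from html.parser import HTMLParser

# Tag/attribute pairs that commonly carry fetchable URLs (an assumption: not exhaustive).
URL_ATTRS = {
    "img": ("src", "srcset"),
    "link": ("href",),
    "script": ("src",),
    "iframe": ("src",),
    "source": ("src", "srcset"),
    "video": ("src", "poster"),
    "audio": ("src",),
    "object": ("data",),
    "embed": ("src",),
}
# CSS url(...) and @import "..." references, in <style> blocks or style="" attributes.
CSS_REF = re.compile(r"""url\(\s*['"]?([^'")\s]+)|@import\s+['"]([^'"]+)""", re.IGNORECASE)
REMOTE_PREFIXES = ("http://", "https://", "ftp://", "//")


class RemoteRefScanner(HTMLParser):
    def __init__(self):
        super().__init__()
        self.refs = []
        self._style_chunks = None  # collects <style> text while inside the element

    def _add_if_remote(self, url):
        url = url.strip()
        if url.lower().startswith(REMOTE_PREFIXES):
            self.refs.append(url)

    def _scan_css(self, css):
        for match in CSS_REF.finditer(css):
            self._add_if_remote(match.group(1) or match.group(2))

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if value is None:
                continue
            if name == "style":
                self._scan_css(value)
            elif name in URL_ATTRS.get(tag, ()):
                # srcset holds comma-separated "URL descriptor" candidates.
                candidates = value.split(",") if name == "srcset" else [value]
                for candidate in candidates:
                    if candidate.strip():
                        self._add_if_remote(candidate.split()[0])
        if tag == "style":
            self._style_chunks = []

    def handle_data(self, data):
        if self._style_chunks is not None:
            self._style_chunks.append(data)

    def handle_endtag(self, tag):
        if tag == "style" and self._style_chunks is not None:
            self._scan_css("".join(self._style_chunks))
            self._style_chunks = None


def find_remote_references(html):
    """Return remote URLs referenced by the HTML, in document order."""
    scanner = RemoteRefScanner()
    scanner.feed(html)
    scanner.close()
    return scanner.refs
```

An empty result does not prove an input is offline-safe; it only means this scanner found nothing it recognizes.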

Threat Model

The relevant threat model is straightforward:

- An attacker controls or influences HTML input.

- A pipeline automatically runs textutil -convert txt.

- The conversion host has outbound network access.

- The system assumes conversion is an offline parsing operation.

If remote references are resolved during conversion, attacker input can influence outbound requests from trusted infrastructure.

That creates an SSRF-style integration primitive, even if textutil itself is working as designed.

PoC: HTML Conversion Triggering HTTP Requests

Controlled differential tests showed the following behavior:

- Plain text or local-only HTML generated no network activity.

- HTML containing remote <img> and <link rel="stylesheet"> references triggered outbound HTTP requests.

Representative output from the harness:

```
plain_txt rc 0 new_hits 0
html_no_remote rc 0 new_hits 0
html_remote_img rc 0 new_hits 1
html_remote_css rc 0 new_hits 1
all_hits ['/img1.png', '/style.css']
```

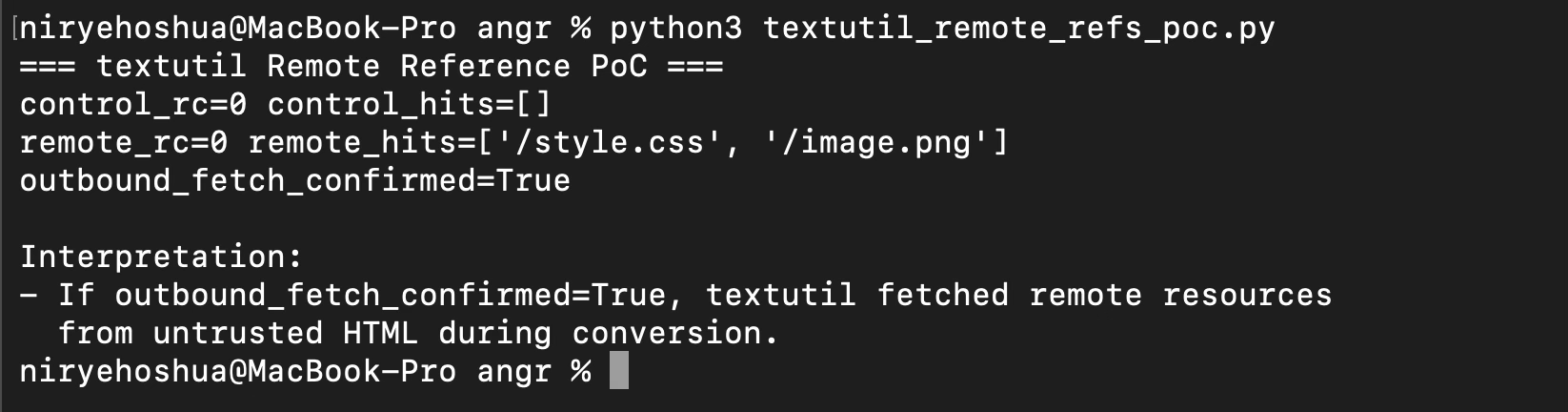

Figure 1: Differential textutil test showing no network activity for control input and outbound requests for HTML containing remote references.

Reproduction

The following minimal test is sufficient to reproduce the behavior:

- Start a local HTTP listener.

- Create one HTML file without remote references.

- Create another HTML file with external <img> and <link rel="stylesheet"> references.

- Run the same textutil -convert txt command on both files.

- Compare listener hits.

The important result is the differential behavior: the listener receives no requests for the control input and receives requests only when remote references are present.

```python
#!/usr/bin/env python3
"""Differential test: does textutil fetch remote resources while converting HTML?"""

import http.server
import subprocess
import tempfile
import threading
from pathlib import Path

PORT = 8765
hits = []  # request paths received by the local listener


class Handler(http.server.BaseHTTPRequestHandler):
    def do_GET(self):
        # Record the fetch, then answer with a tiny body.
        hits.append(self.path)
        body = b"ok"
        self.send_response(200)
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, fmt, *args):
        return  # keep harness output quiet


def convert(src, dst):
    """Run one textutil conversion; return (return code, listener hits caused by it)."""
    before = len(hits)
    proc = subprocess.run(
        ["/usr/bin/textutil", "-convert", "txt", str(src), "-output", str(dst)],
        capture_output=True,
        text=True,
    )
    return proc.returncode, hits[before:]


# Bind before starting the thread, so the listener is ready without a sleep().
httpd = http.server.HTTPServer(("127.0.0.1", PORT), Handler)
threading.Thread(target=httpd.serve_forever, daemon=True).start()

with tempfile.TemporaryDirectory() as td:
    td = Path(td)
    control_html = td / "control.html"
    remote_html = td / "remote.html"

    control_html.write_text("""
<html>
<head><title>Control</title></head>
<body><p>No remote references here.</p></body>
</html>
""")

    remote_html.write_text(f"""
<html>
<head>
<link rel="stylesheet" href="http://127.0.0.1:{PORT}/style.css">
</head>
<body>
<p>Remote reference test.</p>
<img src="http://127.0.0.1:{PORT}/image.png">
</body>
</html>
""")

    control_rc, control_hits = convert(control_html, td / "control.txt")
    remote_rc, remote_hits = convert(remote_html, td / "remote.txt")

httpd.shutdown()
httpd.server_close()

print(f"control_rc={control_rc}")
print(f"control_hits={control_hits}")
print(f"remote_rc={remote_rc}")
print(f"remote_hits={remote_hits}")
print(f"outbound_fetch_confirmed={bool(remote_hits)}")
```

Expected output on the tested host (the order of the two fetched paths may differ):

```
control_rc=0
control_hits=[]
remote_rc=0
remote_hits=['/style.css', '/image.png']
outbound_fetch_confirmed=True
```

Why This Is Not Necessarily a textutil Vulnerability

By itself, this behavior can be interpreted as expected rich-content handling rather than a binary defect.

It should usually not be framed as:

- “memory bug in textutil”

- “standalone macOS vulnerability”

- “Apple 0day”

It is better framed as:

- an integration risk when untrusted HTML is processed with network-capable conversion behavior;

- a reminder that conversion tools may have side effects;

- a server-side request primitive if placed inside backend automation.

This distinction matters. It keeps the analysis accurate and avoids overstating the claim.

When This Becomes SSRF-Style Risk

In automation and backend environments, converter-side requests are server-side behavior.

If attacker-controlled HTML can influence remote references, and the conversion worker can reach internal or external network destinations, the conversion step becomes a request primitive.

Potential outcomes include:

- probing internal endpoints;

- reaching localhost-only services from the worker;

- creating unexpected egress from trusted processing infrastructure;

- bypassing assumptions that document conversion is offline-only.

The important point is not that every textutil invocation is dangerous. The point is that automated untrusted-input conversion must be modeled as active processing with potential side effects.
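Which of these outcomes applies depends on where a reference points. As a triage aid, the sketch below labels a reference URL as loopback, link-local, private, or public using only `urllib.parse` and `ipaddress`; the function name and labels are our own. Hostnames are deliberately left unclassified, because their real destination is only known after DNS resolution on the worker.

```python
import ipaddress
from urllib.parse import urlsplit


def classify_destination(url):
    """Coarse label for where a converter-side request would be sent. Sketch only."""
    host = urlsplit(url).hostname  # lowercased; IPv6 brackets removed
    if host is None:
        return "no-host"
    if host == "localhost" or host.endswith(".localhost"):
        return "loopback"
    try:
        ip = ipaddress.ip_address(host)
    except ValueError:
        # A DNS name: it may still resolve to an internal address on the worker.
        return "unresolved-hostname"
    if ip.is_loopback:
        return "loopback"
    if ip.is_link_local:  # checked before is_private, which also covers link-local ranges
        return "link-local"
    if ip.is_private:
        return "private"
    return "public"
```

A `public` label is not a safety guarantee: redirects and DNS answers can still lead elsewhere, which is why egress controls matter more than any pre-check on the HTML.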

Mitigations for Network-Active Conversion

Recommended layered controls:

- Use -noload in untrusted-input conversion paths.

- Run conversion workers in sandboxed environments.

- Enforce deny-by-default egress filtering.

- Sanitize remote-bearing HTML constructs before conversion.

- Keep trusted and untrusted conversion paths separate.

- Monitor unexpected outbound traffic from conversion workers.
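Two of these controls can be combined at the call site. The sketch below always passes `-noload` (which, per textutil's man page, suppresses loading of subsidiary resources) and adds a wall-clock bound through `subprocess.run(timeout=...)`. `build_textutil_argv`, `convert_untrusted`, and the 30-second default are hypothetical choices; the wrapper complements sandboxing and deny-by-default egress rather than replacing them.

```python
import subprocess
from pathlib import Path

TEXTUTIL = "/usr/bin/textutil"


def build_textutil_argv(src, dst, noload=True):
    """Build the textutil command line; untrusted paths should always use noload=True."""
    argv = [TEXTUTIL]
    if noload:
        argv.append("-noload")  # do not fetch subsidiary (e.g. remote) resources
    argv += ["-convert", "txt", str(src), "-output", str(dst)]
    return argv


def convert_untrusted(src, dst, timeout_s=30.0):
    """Convert an untrusted file to plain text with loading disabled and a time bound."""
    result = subprocess.run(
        build_textutil_argv(src, dst, noload=True),
        capture_output=True,
        text=True,
        timeout=timeout_s,  # raises subprocess.TimeoutExpired if exceeded
    )
    if result.returncode != 0:
        raise RuntimeError(f"textutil failed ({result.returncode}): {result.stderr.strip()}")
    return Path(dst).read_text(errors="replace")
```

Keeping argument construction in one function makes it easy to assert, in a unit test, that no untrusted path can reach textutil without `-noload`.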

Mitigation Validation: -noload

The same remote-reference input can be replayed with -noload:

```sh
/usr/bin/textutil -noload -convert txt /tmp/remote.html -output /tmp/remote.txt
```

Expected behavior in this model:

- conversion can still succeed for text extraction use cases;

- listener hit count should remain unchanged during this run;

- the outbound fetch side effect should be removed for the tested pathway.

This mitigation should be validated on the exact OS version and input formats used by the deployment.

Case Study II: KeePassXC and KDF Cost Boundaries

Password Managers Are Designed to Be Slow

KDF cost in password managers is intentionally high. That is not a flaw. It is a core defensive property.

A password manager should make offline guessing expensive. Every password attempt should require meaningful computation. Over time, as hardware improves, KDF parameters may need to increase to preserve the same defensive cost.

The problem is not that KeePassXC performs expensive derivation work.

The problem is whether attacker-controlled database metadata can silently impose unusually expensive work before the application meaningfully warns, rejects, or bounds the operation.

KDBX Metadata as a Cost-Control Surface

The observed slowdown correlated with KDF parameters supplied by the crafted KDBX file, specifically the elevated transform-round value.

In practical terms, a small metadata-level difference produced a large runtime difference in the tested workflow.

This makes the KDBX file more than a passive data container. In the tested path, it can supply parameters that influence how much computation the application performs.

That creates a boundary problem:

- user-selected high KDF cost is legitimate;

- attacker-supplied pathological KDF cost is a resource-governance concern.
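Because the KDF parameters live in the file's outer header, which is readable without the password, a pipeline can inspect the requested cost before any derivation starts. The sketch below does that with `struct` alone, based on our reading of the public KDBX 3.1 and KDBX 4 format descriptions: field 6 (`TransformRounds`, UInt64) in 3.x, and field 11 (`KdfParameters`, a VariantDictionary) in 4.x, where AES-KDF stores its rounds under the key `R`. The field IDs, type tags, and helper names are assumptions to verify against the KeePassXC source, and the parser does only minimal bounds checking.

```python
import struct

KDBX_SIG1, KDBX_SIG2 = 0x9AA2D903, 0xB54BFB67
# VariantDictionary numeric type tags; strings (0x18) and byte arrays (0x42) are returned raw.
VD_NUMERIC = {0x04: "<I", 0x05: "<Q", 0x08: "<?", 0x0C: "<i", 0x0D: "<q"}


def parse_variant_dictionary(buf):
    """Decode a KDBX 4 VariantDictionary (used for KdfParameters) into a dict."""
    (version,) = struct.unpack_from("<H", buf, 0)
    if (version & 0xFF00) > 0x0100:
        raise ValueError(f"unsupported VariantDictionary version {version:#06x}")
    pos, params = 2, {}
    while True:
        vtype = buf[pos]
        pos += 1
        if vtype == 0x00:  # terminator
            return params
        (key_len,) = struct.unpack_from("<i", buf, pos)
        pos += 4
        key = buf[pos:pos + key_len].decode("utf-8")
        pos += key_len
        (val_len,) = struct.unpack_from("<i", buf, pos)
        pos += 4
        raw = buf[pos:pos + val_len]
        pos += val_len
        fmt = VD_NUMERIC.get(vtype)
        params[key] = struct.unpack(fmt, raw)[0] if fmt else raw


def read_kdf_params(data):
    """Return KDF parameters from a KDBX outer header. Needs no password or key file."""
    sig1, sig2, _minor, major = struct.unpack_from("<IIHH", data, 0)
    if (sig1, sig2) != (KDBX_SIG1, KDBX_SIG2):
        raise ValueError("not a KDBX file")
    size_fmt = "<I" if major >= 4 else "<H"  # field length: 4 bytes in KDBX 4, 2 bytes in 3.x
    size_len = struct.calcsize(size_fmt)
    pos = 12
    while pos < len(data):
        field_id = data[pos]
        (size,) = struct.unpack_from(size_fmt, data, pos + 1)
        pos += 1 + size_len
        value = data[pos:pos + size]
        pos += size
        if field_id == 0:  # EndOfHeader
            break
        if major >= 4 and field_id == 11:  # KdfParameters
            return parse_variant_dictionary(value)
        if major < 4 and field_id == 6:  # TransformRounds (AES-KDF)
            return {"R": struct.unpack("<Q", value)[0]}
    raise ValueError("no KDF parameters found in header")
```

Usage: pass the file's bytes to `read_kdf_params` and compare the returned `R` value (or, for Argon2, the iteration and memory entries) against a policy threshold before handing the file to the application.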

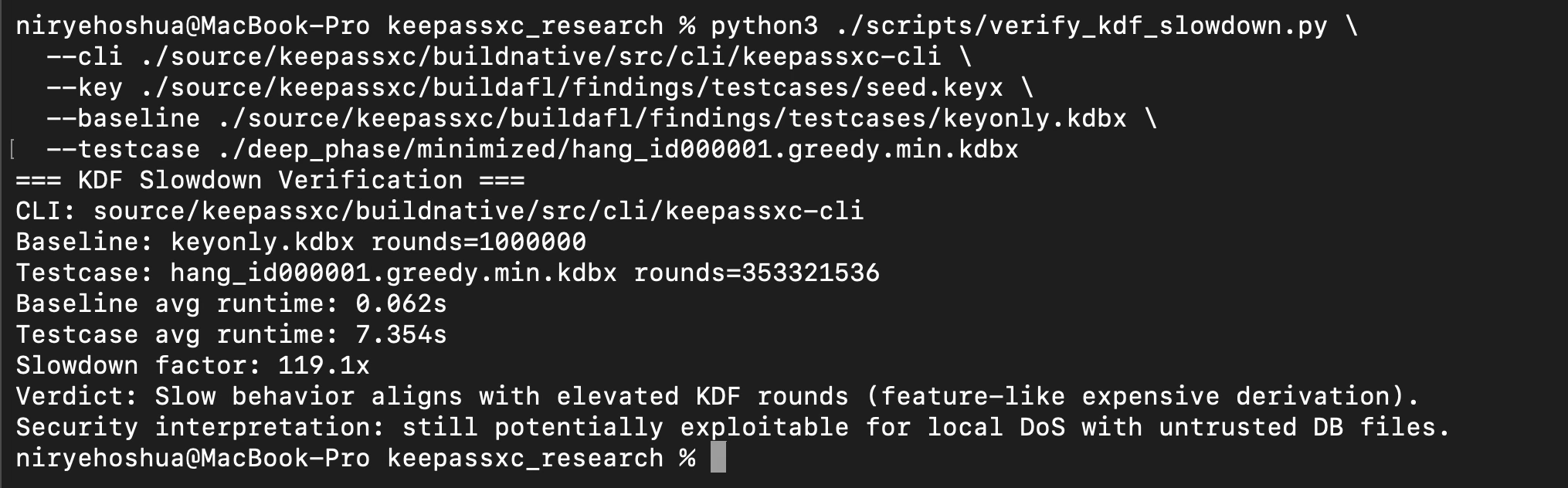

PoC: Baseline KDBX vs Crafted High-Rounds Testcase

Observed values in reproducible testing:

- Baseline database transform rounds: 1,000,000

- Crafted testcase transform rounds: 353,321,536

Observed runtime impact:

- Baseline: approximately 0.05-0.07s

- Crafted testcase: approximately 7.3-7.7s

- Slowdown: roughly 100-150x, depending on how the runs are paired (about two orders of magnitude)

This is not an infinite loop. It is a high-cost computation path.
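The reported figures can be cross-checked with a little arithmetic. The sketch below uses only the numbers above; the per-round estimate rests on an explicit assumption (the crafted run's time is dominated by KDF work that scales linearly with rounds), which these measurements alone do not prove.

```python
# Figures reported above for the baseline and crafted KDBX runs.
baseline_rounds, crafted_rounds = 1_000_000, 353_321_536
baseline_s = (0.05, 0.07)  # observed wall-clock range, seconds
crafted_s = (7.3, 7.7)

rounds_ratio = crafted_rounds / baseline_rounds
runtime_ratio = (crafted_s[0] / baseline_s[1], crafted_s[1] / baseline_s[0])  # min, max pairing

# Assumption: crafted runtime is dominated by KDF work that is linear in rounds.
ns_per_round = tuple(t / crafted_rounds * 1e9 for t in crafted_s)
baseline_kdf_s = tuple(baseline_rounds * ns / 1e9 for ns in ns_per_round)

print(f"rounds ratio:  {rounds_ratio:.0f}x")                                 # 353x
print(f"runtime ratio: {runtime_ratio[0]:.0f}x to {runtime_ratio[1]:.0f}x")  # 104x to 154x
print(f"cost per round: {ns_per_round[0]:.1f}-{ns_per_round[1]:.1f} ns")     # 20.7-21.8 ns
print(f"implied KDF time in baseline: {baseline_kdf_s[0]:.3f}-{baseline_kdf_s[1]:.3f} s")  # 0.021-0.022 s
```

Under that assumption, only about 0.02 s of the 0.05-0.07 s baseline is key derivation and the rest is fixed per-invocation overhead, which would explain why the runtime ratio (roughly 100-150x) is smaller than the rounds ratio (roughly 353x).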

Reproduction

The test compares a normal KDBX file against a minimized crafted testcase using the same CLI binary, the same key material, and repeated runs.

The purpose is not to show a crash. The purpose is to show that a small metadata difference can produce a large runtime difference.
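The comparison can be scripted so that both inputs are timed identically. The sketch below is a generic harness, not the one used for these measurements: `time_command` and `compare` are hypothetical helpers, and the caller supplies the exact CLI invocation for each database plus any key material on stdin (whether the CLI reads it from stdin should be checked for the build in use).

```python
import statistics
import subprocess
import time


def time_command(argv, runs=5, stdin_bytes=None):
    """Run argv `runs` times; return (median, min, max) wall-clock seconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        subprocess.run(argv, input=stdin_bytes, stdout=subprocess.DEVNULL,
                       stderr=subprocess.DEVNULL, check=False)
        samples.append(time.perf_counter() - start)
    return statistics.median(samples), min(samples), max(samples)


def compare(baseline_argv, test_argv, runs=5, stdin_bytes=None):
    """Time both inputs with identical settings and report the median slowdown."""
    base = time_command(baseline_argv, runs, stdin_bytes)
    test = time_command(test_argv, runs, stdin_bytes)
    return {"baseline": base, "test": test, "slowdown": test[0] / base[0]}
```

The median is used rather than the mean so that a single unusually slow run (cache warm-up, scheduler noise) does not dominate the reported slowdown.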

Figure 2: KeePassXC KDF timing comparison showing elevated transform rounds and approximately two orders of magnitude slowdown.

Local paths were normalized for publication. The original test used the same binary, the same key material, and repeated timing runs across both inputs.

Interpretation:

- baseline rounds observed around 1,000,000;

- crafted testcase rounds observed around 353,321,536;

- repeated runtime slowdown was around two orders of magnitude.

The minimized KDBX testcase is not included as a weaponized artifact in this article. The goal is to document the methodology and boundary condition, not to distribute a denial-of-service sample.

Figure 3: Testcase layout showing that the slowdown is driven by KDF parameters rather than large file size.

Why This Is Not a Cryptographic Break

No cryptographic primitive was broken.

This research does not show:

- password disclosure;

- key recovery;

- authentication bypass;

- decryption without credentials;

- database integrity failure.

The finding is about resource-consumption control: attacker-influenced input can push expensive-by-design logic into costly operational behavior.

The cryptography can be sound while the surrounding resource policy is still worth discussing.

When This Becomes Resource-Consumption Risk

Impact rises in contexts such as:

- automated opening or validation workflows;

- batch processing;

- repeated retries or fan-out processing;

- constrained worker environments;

- GUI flows where users receive no warning before expensive derivation begins;

- security or inventory tools that inspect KDBX files at scale.

For a single user manually opening one database, the impact may be low. For automated systems processing many files, the same behavior can become operationally meaningful.

Guardrails for Expensive-by-Design Logic

The goal is not to weaken password database security. The goal is to make expensive operations intentional.

Possible guardrails include:

- Apply maximum accepted KDF parameter thresholds.

- Add explicit warnings or confirmation for extreme values.

- Enforce bounded processing time or resource budgets per file.

- Isolate untrusted-file processing from critical interactive paths.

- Provide a safe mode for CLI and automation workflows.

- Log high-cost KDF metadata without logging secrets.
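The per-file budget guardrail can be enforced by the worker without modifying the tool. The sketch below assumes a POSIX host and Python's `resource` module: it sets a CPU-time limit in the child with `RLIMIT_CPU` and a wall-clock limit with `subprocess.run(timeout=...)`. `run_with_budget` and its defaults are hypothetical.

```python
import resource
import subprocess


def run_with_budget(argv, cpu_seconds=10, wall_seconds=30):
    """Run one per-file job under a CPU-time cap and a wall-clock cap (POSIX only)."""

    def apply_limits():
        # Runs in the child between fork and exec. The soft limit triggers SIGXCPU;
        # the hard limit one second later is a SIGKILL backstop if SIGXCPU is handled.
        resource.setrlimit(resource.RLIMIT_CPU, (cpu_seconds, cpu_seconds + 1))
        resource.setrlimit(resource.RLIMIT_CORE, (0, 0))  # no core files from SIGXCPU

    try:
        proc = subprocess.run(argv, preexec_fn=apply_limits, capture_output=True,
                              timeout=wall_seconds)
    except subprocess.TimeoutExpired:
        return "wall-timeout"  # subprocess.run kills the child before raising
    if proc.returncode < 0:
        return f"killed-by-signal-{-proc.returncode}"
    return "ok" if proc.returncode == 0 else f"exit-{proc.returncode}"
```

The returned label (`ok`, `exit-N`, `killed-by-signal-N`, or `wall-timeout`) can be logged next to the file name. `preexec_fn` is not safe in heavily multithreaded parents, so a dedicated launcher process may be preferable there.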

A reasonable warning could look like this:

This database uses unusually expensive key-derivation parameters.

Opening it may consume significant CPU time.

Continue?
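The threshold and warning ideas can be expressed as a small policy function. The sketch below is hypothetical: the names and both threshold values are illustrative placeholders, not KeePassXC defaults, and it covers only the transform-rounds value discussed here (an Argon2 policy would also need bounds on iterations and memory).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KdfPolicy:
    # Illustrative placeholders: derive real values from what users legitimately configure.
    warn_rounds: int = 10_000_000
    max_rounds: int = 100_000_000


def kdf_decision(rounds: int, policy: KdfPolicy, interactive: bool) -> str:
    """Return 'proceed', 'confirm', or 'reject' before any key derivation starts."""
    if rounds > policy.max_rounds:
        return "reject"
    if rounds > policy.warn_rounds:
        # A user can be asked; an unattended worker should not silently spend the CPU.
        return "confirm" if interactive else "reject"
    return "proceed"
```

In an interactive client, `confirm` maps to a warning like the one above; in an automation safe mode, anything above the warning threshold is refused and logged with the rounds value only, never with key material.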

For CLI workflows, an explicit mode could distinguish safe automation from deliberate high-cost processing:

```sh
keepassxc-cli --safe-kdf open database.kdbx
keepassxc-cli --allow-high-kdf open database.kdbx
```

These examples are design suggestions, not claims about current KeePassXC command-line options.

Why These Are Not Traditional Vulnerability Claims

Neither case study depends on proving that the underlying tool is defective.

- In the textutil case, remote resource loading may be valid rich-content behavior.

- In the KeePassXC case, expensive KDF work is a core security property.

The security lesson comes from context. When these behaviors are exposed to attacker-controlled input inside automation, they become primitives: one for network activity, the other for computation cost.

The non-claim framing is important because it prevents the wrong conclusion. The next question is what pattern remains once traditional vulnerability claims are removed.

Common Pattern Across Both Cases

At first glance, these behaviors seem unrelated.

- textutil: attacker-controlled input influences network activity.

- KeePassXC: attacker-controlled input influences CPU cost.

One turns input into outbound requests. The other turns input into expensive computation.

Both are input-controlled behavior primitives inside trusted tools. Both become security-relevant when placed inside automation.

The common pattern is not exploitation in the traditional sense. It is capability exposure across a trust boundary.

Engineering Checklist

Use this checklist for any trusted local tool placed inside backend pipelines:

- Input trust: Is any part of the input attacker-controlled?

- Side effects: Can processing trigger network, file, or process side effects?

- Resource boundaries: Are CPU, memory, time, disk, and network budgets enforced?

- Isolation: Is tool execution sandboxed and least-privileged?

- Egress policy: Is outbound traffic deny-by-default?

- Control tests: Do control vs test inputs prove the claimed behavior?

- Failure framing: Is this a binary flaw or an integration risk?

- Safe mode: Does the tool provide an offline, no-load, or bounded mode?

- Observability: Would unexpected side effects be logged or noticed?

- Separation: Are trusted and untrusted processing paths separated?

Do not threat-model tools by reputation. Threat-model them by behavior.

Limitations and Non-Claims

This research does not claim:

- remote code execution;

- privilege escalation;

- memory corruption in the analyzed binaries;

- cryptographic failure in KeePassXC;

- that either case, in isolation, would be accepted by a vendor as a CVE.

This is an integration-focused analysis of trust boundaries, side effects, and operational security behavior under attacker-controlled inputs.

The article intentionally avoids sensational framing because the value is not in claiming that the tools are broken. The value is in showing how familiar behavior can become security-relevant when the surrounding system assumes a safer behavior model than reality provides.

Practical Takeaway

Before placing a local utility inside an automated pipeline, ask two questions:

- What can attacker-controlled input cause this tool to do?

- What assumptions does the surrounding system make about that behavior?

If the system assumes “offline” but the tool can fetch resources, that is a boundary problem.

If the system assumes “bounded” but the tool can spend significant CPU based on file metadata, that is a boundary problem.

The tool does not need to be broken for the architecture to be fragile.

Conclusion: Threat-Model Tools by Behavior, Not Reputation

Neither case requires sensational claims to matter.

textutil behavior can be functionally correct and still dangerous in untrusted automation contexts. KeePassXC can be cryptographically sound and still expose resource-consumption risk when cost controls are input-influenced.

This is the broader lesson:

Correct behavior can become security-relevant behavior when it crosses the wrong trust boundary.

For real-world systems, the safe default is:

- assume converters and parsers are active processors, not passive utilities;

- enforce explicit network and compute boundaries;

- validate behavior under attacker-controlled input conditions;

- isolate untrusted processing paths;

- observe and log unexpected side effects.

The safest engineering posture is to threat-model tools by observed behavior, not by reputation.

Offline-looking tools are not always offline-safe.